One Month at Hazy

1 Month: Deadlines

This post is 11 days late, if measured in fiscal months; 1 day late, by business day-months; and right on time, if by business day-weeks. Further, I'm not even sure if "late" would apply here: nobody told me to write this post - equally probable, nobody wants me to write this post. I simply chose a "month" as a good time to post an update to my previous one week at hazy. I can't give you a numerical proof why a month is a good time. It just seemed reasonable.

I'm going somewhere with this.

Measures

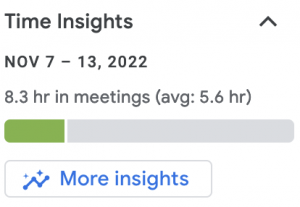

I ocasionally really like Google. In this case, they've added a very simple, but very nice insight widget to GCal:

Since I started, it's looked like this:

| week | hours | avg (Google) | avg (cumulative, actual) |
|---|---|---|---|
| 0 | 8.8 | 0.3* | 8.8 |
| 1 | 7.0 | 3.1 | 7.9 |
| 2 | 4.2 | 5.3 | 6.67 |
| 3 | 5.5 | 6.7 | 6.375 |
| 4 | 8.3 | 5.6 | 6.76 |

* I had to look back in the calendar: it seems Google retroactively applies a default of 0.3 hrs to accounts, meaning the avg. is a bit rubbish. So I've manually added a corrected, cumulative column. Ya had something good goin' for ya Google, and ya squandered it :(
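For the curious, the corrected column is nothing more than a running mean of the weekly hours. Here is a minimal Python sketch that reproduces it. The names `weekly_hours` and `cumulative_average` are purely illustrative, and the only inputs are the hours from the table above.

```python
from itertools import accumulate

# Weekly meeting hours for weeks 0-4, copied from the "hours" column above.
weekly_hours = [8.8, 7.0, 4.2, 5.5, 8.3]


def cumulative_average(hours):
    """Return the running mean of `hours` after each week."""
    running_totals = accumulate(hours)
    # Week numbering starts at 0, so week N has N + 1 data points behind it.
    return [total / (week + 1) for week, total in enumerate(running_totals)]


averages = cumulative_average(weekly_hours)

for week, (hours, avg) in enumerate(zip(weekly_hours, averages)):
    print(f"week {week}: {hours:.1f} h in meetings, cumulative avg {avg:.3f} h")
```

Unlike the widget's figure, this average only counts weeks I was actually here, so it isn't dragged down by a retroactive default.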

Depending on your perspective, ~1 day per week in meetings may be a lot, or a little. In my case, this first month, the vast majority of those meeting hours were either (1) 1:1 meet and greets ("coffee" chats), (2) scheduled by me as pair programming or planning, or (3) scheduled but not fully observed (standup cancelled, meeting ended early). From my perspective, having 4 (four FULL) days a week of meeting-free time is hard to grok. To be clear, my previous role was amazing, but it came at a cost: frequently 20-25 hours per week of meetings. So, when I joined Hazy I said my number one goal was to "code more". I can happily say it's absolutely easier to achieve that goal when I can dedicate ~80% of my time to it, uninterrupted.

I point this out because it's a really common complaint in tech. Big Corp™ is renowned for cramming the calendars of engineers, whose time is precious (and, let's be pragmatic, expensive).

Hazy, not so. In fact, I've felt a bit guilty about (2) above, as I think there is the opposite of a meeting culture here: an anti-meeting attitude.

I sadly lack tracked measures for this, but from 5 weeks of working here I can confirm that people happily take meetings. They also happily hang up as soon as the important stuff is said/agreed/delegated. That is to say, meetings are efficient and the focus is on working, not bikeshedding.

Benchmarks

What you're measuring matters. Did this post deliver on time for a "month"? Should we trust Google's average weekly meeting hours? (Yes and no, respectively.)

In the AI field, we have to spend a huge amount of effort on asking these measurement questions. That is, before asking "how good" a given model may be, you need to know that you're measuring the right thing. And, like fiscal/calendar/business months, the right measurement isn't always immediately clear.

Thus, I've now begun my first project in earnest: benchmarking code performance measures. That is to say, when we create a model for you, how do we say that it's a "good" model? How do we know that it's good? What even is goodness?

Upping my PR Game

I mentioned in my first post that I was going to take it a bit slow pushing code. That's seemingly paid off, as it gave me time to explore a handful of tools that have been on my backlog for quite some time (but that, for one business reason or another, I haven't been able to incorporate into a real production-focussed project):

- Data pipelining with Kedro

- Experiment tracking with MLflow

- Data versioning (and more) with DVC

- Some other stuff

No time was wasted (bikeshedding) here. A challenger PoC was coded up, evaluated (see (2) above), and approved.

All in all, I feel my "onboarding" is pretty complete.

Now it's time to set the bar.
