Hazy synthetic data quality metrics explained

Synthetic data enables fast innovation by providing a safe way to share very sensitive data, like banking transactions, without compromising privacy. After removing personal identifiers, like IDs, names and addresses, Hazy machine learning algorithms generate a synthetic version of real data that retains nearly all the statistical properties of the original data but does not match any real record.

In other words, the synthetic data keeps all the data value while not compromising any of the privacy.

Because synthetic data is a relatively new field, stakeholders raise many concerns when dealing with it, mainly about quality and safety. The most common questions we hear are:

- How do you know that the synthetic data preserves the same richness, correlations and properties of the original data?

- How can we be sure the synthetic data is really safe and can’t be reverse engineered to disclose private information?

In order to answer these questions, Hazy has developed a set of metrics to quantify the quality and safety of our synthetic data generation. Today we will explain those metrics that will bring rigour to the discussion on the quality of our synthetic data. Another blogpost will tackle the essential privacy and security questions.

Five ways to measure synthetic data quality

It’s important to our users that they are able to verify the quality of our synthetic data before they use it in production. With this in mind, Hazy has five major metrics to assess the quality of our synthetic data generation.

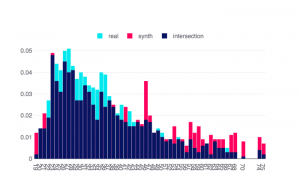

Histogram Similarity

Assuming data is tabular, this synthetic data metric quantifies the overlap of original versus synthetic data distributions corresponding to each column. If both distributions overlap perfectly this metric is 1, and it’s 0 if no overlap is found. Histogram Similarity is the easiest metric to understand and visualise. Any model should be able to generate synthetic data with a Histogram Similarity score above 0.80, with an 80 percent histogram overlap.
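As a rough illustration of the overlap idea, the sketch below computes a histogram intersection for a single numeric column. It is not Hazy's implementation: the function name `histogram_similarity`, the shared equal-width binning and the default of 10 bins are all assumptions made for this example.

```python
from collections import Counter


def histogram_similarity(original, synthetic, bins=10):
    """Overlap (histogram intersection) of two samples of one numeric column.

    Returns 1.0 when the normalised histograms coincide and 0.0 when they
    share no bin. The shared equal-width binning is an assumption.
    """
    lo = min(min(original), min(synthetic))
    hi = max(max(original), max(synthetic))
    width = (hi - lo) / bins or 1.0  # guard against a constant column

    def normalised_hist(values):
        # Map each value to a bin index; the maximum falls in the last bin.
        counts = Counter(min(int((v - lo) / width), bins - 1) for v in values)
        return [counts.get(b, 0) / len(values) for b in range(bins)]

    p = normalised_hist(original)
    q = normalised_hist(synthetic)
    # The intersection sums, bin by bin, the mass both histograms share.
    return sum(min(pi, qi) for pi, qi in zip(p, q))
```

Calling `histogram_similarity(real_column, synthetic_column)` on each column in turn gives one score per column. The result depends on the number of bins, so the same binning should be used whenever scores are compared.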

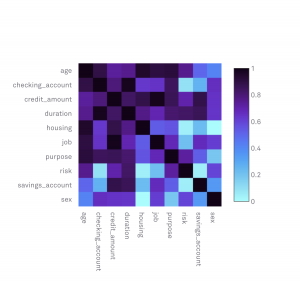

Mutual Information (MI)

Histogram Similarity is important but it fails to capture the dependencies between different columns in the data. For that purpose we use the concept of Mutual Information that measures the co-dependencies — or correlations if data is numeric — between all pairs of variables. Quantifying information is an abstract, but very powerful concept that allows us to understand the relationship between variables when we don’t have another way to achieve that.

Mutual information between a pair of variables X and Y quantifies how much information about Y can be obtained by observing variable X:

$$ MI(X;Y) = \sum_{x \in X} \sum_{y \in Y} p(x, y) \log \frac{p(x, y)}{p(x)p(y)} $$

where $p(x)$ is the probability of observing x, $p(y)$ is the probability of observing y and $p(x,y)$ is the joint probability of observing x and y together. It can be shown that

$$ MI(X;Y) = H(Y) - H(Y | X) $$

where

$$ H = - \sum_{i} p_{i} \log_{2} p_{i} $$

is the entropy, or information, contained in each variable.

Mutual Information is not an easy concept to grasp. Let's explore the following example to help explain its meaning. Suppose we want to evaluate the Mutual Information between X (blood type) and Y (blood pressure) as a potential indicator for the likelihood of skin cancer. The following table contains hypothetical probabilities of skin cancer for all combinations of X and Y:

| Y: Blood Pressure (rows), X: Blood Type (columns) | A | B | AB | O |
|---|---|---|---|---|
| Very Low | 1/8 | 1/16 | 1/32 | 1/32 |
| Low | 1/16 | 1/8 | 1/32 | 1/32 |
| Medium | 1/16 | 1/16 | 1/16 | 1/16 |
| High | 1/4 | 0 | 0 | 0 |

The question is: how much information does each variable contain, and how much information can we get from X, given Y? Information can be counterintuitive. It is equivalent to the uncertainty or randomness of a variable. In a series of fair coin tosses (heads, tails), each realization has maximum information (entropy): observing any length of past events would not help us predict the very next event. If, on the other hand, the variable is totally repetitive (always heads or always tails), each observation contains zero information.

To evaluate these quantities we simply compute the marginals of X and Y (sums over rows and columns):

$$ \text{Marginal } X = \left(\frac{1}{2}, \frac{1}{4}, \frac{1}{8}, \frac{1}{8}\right) $$

$$ \text{Marginal } Y = \left(\frac{1}{4}, \frac{1}{4}, \frac{1}{4}, \frac{1}{4}\right) $$

And then the information H for variable X is obtained by summing over the marginals of X,

$$ H(X) = - \sum_{i=1}^{4} p_{i} \log_{2} p_{i} = \frac{7}{4} \text{ bits} $$

The same calculation for Y gives $H(Y) = 2$ bits, so Y (blood pressure) is more informative about skin cancer than X (blood type).

The MI between X and Y is:

$$ MI(X;Y) = H(X) - H(X | Y) = \frac{7}{4} - \frac{11}{8} = \frac{3}{8} = 0.375 \text{ bits} $$
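The figures in this worked example can be checked with a few lines of Python. The sketch below is only an illustration using the standard library: it recomputes the marginals, the two entropies and the Mutual Information from the joint table, obtaining $H(X|Y)$ from the chain rule $H(X|Y) = H(X,Y) - H(Y)$. The variable names are chosen for this example.

```python
from fractions import Fraction as F
from math import log2

# Joint probabilities p(x, y) from the table: rows are blood pressure (Y),
# columns are blood type (X) in the order A, B, AB, O.
joint = [
    [F(1, 8), F(1, 16), F(1, 32), F(1, 32)],   # Very Low
    [F(1, 16), F(1, 8), F(1, 32), F(1, 32)],   # Low
    [F(1, 16), F(1, 16), F(1, 16), F(1, 16)],  # Medium
    [F(1, 4), F(0), F(0), F(0)],               # High
]

marginal_x = [sum(col) for col in zip(*joint)]  # add up each column
marginal_y = [sum(row) for row in joint]        # add up each row


def entropy(probs):
    """Shannon entropy in bits; zero-probability terms contribute nothing."""
    return -sum(p * log2(p) for p in map(float, probs) if p > 0)


h_x = entropy(marginal_x)                        # 7/4 bits
h_y = entropy(marginal_y)                        # 2 bits
h_xy = entropy(p for row in joint for p in row)  # joint entropy H(X, Y)
h_x_given_y = h_xy - h_y                         # chain rule: 11/8 bits
mutual_information = h_x - h_x_given_y           # 3/8 = 0.375 bits
```

Because Mutual Information is symmetric, computing `h_y - (h_xy - h_x)` gives the same 0.375 bits.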

As a side note, if X and Y are jointly normal with a correlation of $\rho$, then the mutual information is $-\frac{1}{2}\log(1-\rho^2)$, which diverges logarithmically as $|\rho|$ approaches 1.

The Mutual Information score is calculated over all possible pairs of variables in the data as the relative change in Mutual Information between the original and the synthetic data:

$$ MI_{score} = \sum_{i=1}^{N} \sum_{j=1}^{N} \left[ \frac{ MI(x_{i},x_{j}) } { MI(\hat{x}_{i},\hat{x}_{j}) } \right] $$

where $x$ is the original data and $\hat{x}$ is the synthetic data.

Good synthetic data should have a Mutual Information score of no less than 0.5. The next figure shows an example of a (symmetric) mutual information matrix:

When we developed this MI score alongside Nationwide Building Society, we were building on the work of Carnegie Mellon University’s DoppelGANger generator, which looks to make differentially private sequential synthetic data. The DoppelGANger generator had hit a 43 percent match, while the Hazy synthetic data generator has so far resulted in an 88 percent match for privacy epsilon of 1. We are pleased to be cited as having helped improve on their exceptional work.

Predictive Capability

A further validation of the quality of synthetic data can be obtained by training a specific machine learning model on the synthetic data and testing its performance on the original data. For instance, we may use the synthetic data to predict the likelihood of customer churn using, say, an XGBoost algorithm. Normally this involves splitting the data into a Training Set to train the model and a Test Set to validate the model, in order to avoid overfitting. If the synthetic data is of good quality, the performance of the model trained on synthetic data, measured by accuracy or AUC, should be very similar to that of the model trained on original data. Note that the test set should always consist of the original data:

$$ PC = \frac{\text{Accuracy of the model trained on synthetic data}}{\text{Accuracy of the model trained on original data}} $$

Typically Hazy models can generate synthetic data with scores higher than 0.9, with 1 being a perfect score.
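The ratio can be sketched in a few lines. The example below is a minimal illustration, not Hazy's code: `fit_threshold` is a toy one-feature classifier standing in for a real model such as XGBoost, and all function names and the `(feature, label)` row layout are assumptions. The point it shows is that both models are scored on the same held-out original test set.

```python
def fit_threshold(rows):
    """Toy stand-in model: threshold midway between the two class means.

    rows is a list of (feature, label) pairs with labels 0 and 1.
    """
    xs0 = [x for x, label in rows if label == 0]
    xs1 = [x for x, label in rows if label == 1]
    mean0, mean1 = sum(xs0) / len(xs0), sum(xs1) / len(xs1)
    threshold = (mean0 + mean1) / 2
    if mean1 >= mean0:
        return lambda x: int(x > threshold)
    return lambda x: int(x < threshold)


def accuracy(model, rows):
    """Fraction of rows whose label the model predicts correctly."""
    return sum(model(x) == label for x, label in rows) / len(rows)


def predictive_capability(fit, original_train, synthetic_train, original_test):
    """Accuracy ratio; both models are evaluated on original test data."""
    acc_synthetic = accuracy(fit(synthetic_train), original_test)
    acc_original = accuracy(fit(original_train), original_test)
    return acc_synthetic / acc_original
```

Any training routine that returns a callable model can be passed as `fit`, and the same structure applies if AUC is used in place of accuracy.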

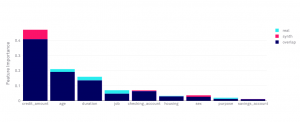

Feature Importance

This metric compares the order of feature importance of variables in the same model when trained on the original data and when trained on the synthetic data. Most machine learning algorithms are able to rank the variables in the data by how informative they are for a specific task.

Synthetic data of good quality should be able to preserve the same order of importance of variables. In the example below, we see that within Hazy you are able to see the level of importance set by the algorithm and how accurately Hazy retains that level.
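One simple way to quantify whether the order is preserved is a rank correlation between the two importance rankings. The sketch below uses the Spearman rank correlation, which is an assumption made for illustration and not necessarily the comparison Hazy uses. The function names and the feature names in the usage note are hypothetical, and ties in importance are assumed not to occur.

```python
def ranks(importances):
    """Rank features from most important (rank 0) to least important."""
    ordered = sorted(importances, key=importances.get, reverse=True)
    return {feature: rank for rank, feature in enumerate(ordered)}


def rank_agreement(importance_original, importance_synthetic):
    """Spearman rank correlation of two feature-importance dicts.

    Both dicts must cover the same features (at least two, no ties).
    Returns 1.0 for identical order and -1.0 for fully reversed order.
    """
    r_orig = ranks(importance_original)
    r_synth = ranks(importance_synthetic)
    n = len(r_orig)
    d2 = sum((r_orig[f] - r_synth[f]) ** 2 for f in r_orig)
    return 1 - 6 * d2 / (n * (n ** 2 - 1))
```

For instance, `rank_agreement({"balance": 0.6, "age": 0.3, "tenure": 0.1}, {"balance": 0.5, "age": 0.4, "tenure": 0.1})` returns 1.0, because both models order the three features identically even though the importance values differ.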

Query Quality

In some situations, synthetic data is used for reporting and business intelligence. For these cases, it is essential that queries made on synthetic data retrieve the same number of rows as on the original data. For instance, if we query the data for users above 50 years old and an annual income below £50,000, the same number of rows should be retrieved as in the original data.

This Query Quality score is obtained by running a battery of random queries and averaging the ratio of the number of rows retrieved in the original and in the synthetic data.
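A minimal sketch of such a battery of queries is shown below. Several details are assumptions made for this example: queries are random range filters on a single numeric column, rows are dictionaries, both datasets have a comparable number of rows, and each ratio is taken as the smaller count divided by the larger one so that the score stays between 0 and 1.

```python
import random


def random_range_query(rows, column, rng):
    """Build a predicate selecting rows whose column lies in a random range."""
    values = [row[column] for row in rows]
    a, b = sorted(rng.uniform(min(values), max(values)) for _ in range(2))
    return lambda row: a <= row[column] <= b


def query_quality(original, synthetic, column, n_queries=100, seed=0):
    """Average agreement in row counts over a battery of random queries."""
    rng = random.Random(seed)
    ratios = []
    for _ in range(n_queries):
        query = random_range_query(original, column, rng)
        n_orig = sum(1 for row in original if query(row))
        n_synth = sum(1 for row in synthetic if query(row))
        if max(n_orig, n_synth) == 0:
            ratios.append(1.0)  # both results are empty, so they agree
        else:
            ratios.append(min(n_orig, n_synth) / max(n_orig, n_synth))
    return sum(ratios) / len(ratios)
```

A realistic battery would combine conditions across several columns, as in the age and income example above, but the averaging step stays the same.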

Remember, it’s not just about quality.

The metrics above give a good understanding of the quality of synthetic data. However, some caution is necessary as, in some cases, a few extreme cases may be overwhelmingly important and, if not captured by the generator, could render the synthetic data useless — like rare events for fraud detection or money laundering. In these cases we may need to skew the sampling mechanism and the metrics to capture these extremes.

Autocorrelation

If you are dealing with sequential data, that is, data with a time dependency such as bank transactions, these temporal dependencies must be preserved in the synthetic data as well. For instance, in healthcare the order of exams and treatments must be preserved: chemotherapy treatments must follow x-rays, CT scans and other medical analysis in a specific order and timing. The synthetic data should preserve this temporal pattern as well as replicate the frequency of events, costs, and outcomes. When talking about fraud detection, it’s important that seasonality patterns, like weekends and holidays, are preserved.

Even more challenging is the replication of seemingly unique events, like the Covid-19 pandemic, which proves itself a formidable challenge for any generative model.

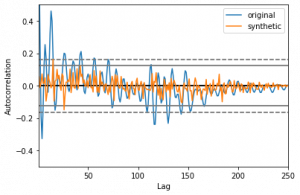

To capture these short- and long-range correlations, the metric of choice is Autocorrelation with a variable lag parameter. Autocorrelation measures how the value $X(t)$ at time $t$ is related to the earlier value $X(t - \delta)$, where $\delta$ is the lag.

For a discrete sequence $y = (y_{1}, y_{2}, \ldots, y_{n})$, the autocorrelation at a lag of $k$ steps (the discrete counterpart of $\delta$) is given by:

$$ AC_{k} = \frac{\sum_{i=1}^{n-k} (y_{i} - \bar{y})(y_{i+k} - \bar{y})}{\sum_{i=1}^{n} (y_{i} - \bar{y})^2} $$

where $\bar{y}$ is the mean of $y$. We assume events occur at a fixed rate, but this restriction does not affect the generality of the concept.
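The formula translates directly into code. The sketch below is a plain Python illustration for a numeric sequence sampled at a fixed rate. The helper `autocorrelation_gap`, which compares original and synthetic sequences by their largest difference across lags, is an assumption added for this example and not a metric described by Hazy.

```python
def autocorrelation(y, k):
    """Sample autocorrelation of the sequence y at lag k (0 <= k < len(y))."""
    n = len(y)
    mean = sum(y) / n
    # Numerator: products of deviations that are k steps apart.
    num = sum((y[i] - mean) * (y[i + k] - mean) for i in range(n - k))
    # Denominator: total squared deviation, so lag 0 always gives 1.0.
    den = sum((v - mean) ** 2 for v in y)
    return num / den


def autocorrelation_gap(original, synthetic, max_lag):
    """Largest absolute autocorrelation difference over lags 1..max_lag."""
    return max(
        abs(autocorrelation(original, k) - autocorrelation(synthetic, k))
        for k in range(1, max_lag + 1)
    )
```

A gap close to 0 means the synthetic sequence reproduces the temporal correlations of the original up to `max_lag`. A constant sequence has zero variance and would need special handling, since the denominator is then 0.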

If the events are categorical instead of numeric (for instance medical exams), the same concept still applies but we use Mutual Information instead.

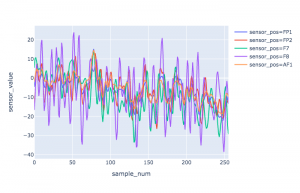

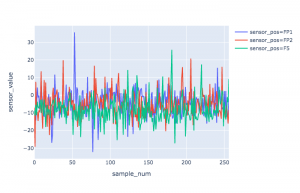

To illustrate Autocorrelation, we consider the following EEG dataset because brainwaves are entirely unique identifiers and thus exceptionally sensitive information. This dataset contains records of EEG signals from 120 patients over a series of trials. Each sample contains measurements from 64 electrodes placed on the subjects’ scalps which were sampled at 256 Hz (3.9-msec epoch) for 1 second. As can be seen in Figure 4 the data has a complex temporal structure but with strong temporal and spatial correlations that have to be preserved in the synthetic version.

For temporal data, Hazy has a set of other metrics to capture the temporal dependencies in the data, which we will discuss in detail in a subsequent post.

Whatever the metric or metrics our customers choose, we are happy that they are able to check the quality of our synthetic data for themselves, building trust and confidence in Hazy’s world-class, enterprise-grade generators.